SubT-MRS: Pushing SLAM Towards All-weather Environments

Simultaneous localization and mapping (SLAM) is a fundamental task for numerous applications such as autonomous navigation and exploration. Although many SLAM datasets have been released, current SLAM solutions still struggle to deliver sustained and resilient performance. One major issue is the absence of high-quality datasets that cover diverse all-weather conditions, together with the lack of a reliable metric for assessing robustness. These limitations significantly restrict the scalability and generalizability of SLAM technologies, impacting their development, validation, and deployment.

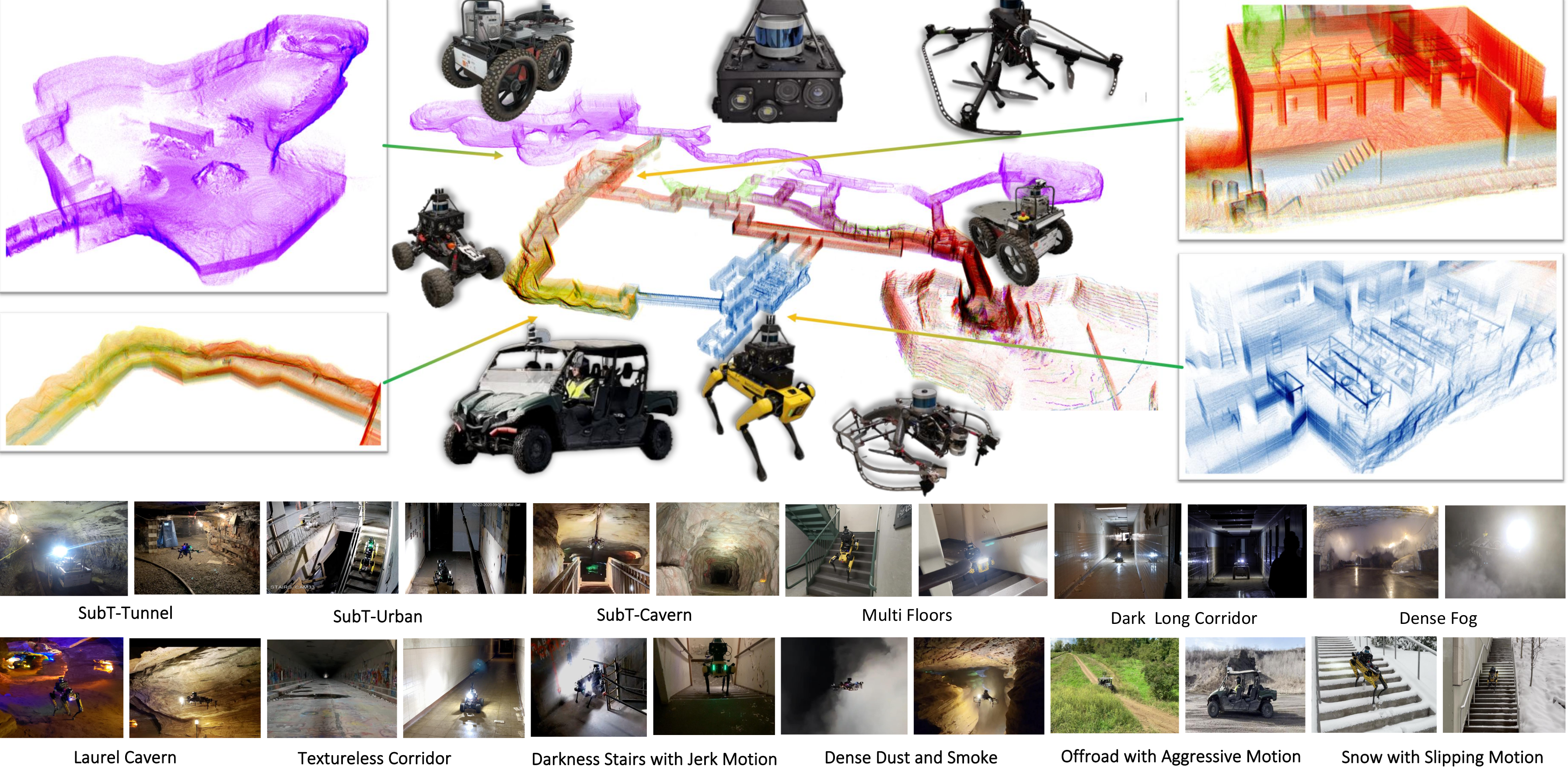

To bridge this gap and push SLAM towards all-weather environments, we present an extremely challenging dataset, SubT-MRS, with scenarios featuring various forms of sensor degradation, aggressive locomotion, and extreme weather conditions. The SubT-MRS dataset comprises 3 years of data from the DARPA Subterranean (SubT) Challenge (2019-2021), extended with an additional 2 years of data from diverse environments (2022-2023). It contains mixed indoor and outdoor settings, including long corridors, off-road scenarios, tunnels, caves, deserts, forests, and bushlands.

Cumulatively, this forms a 5-year dataset encompassing over 2000 hours and 300 kilometers of terrain. It was collected with multimodal sensors, including LiDAR, fisheye cameras, depth cameras, thermal cameras, and IMU; on heterogeneous platforms, including RC cars, legged robots, aerial robots, and wheeled robots; and under extreme obscurant conditions such as dense fog, dust, smoke, and heavy snow.

Citation

Please read our paper for details.

@InProceedings{Zhao_2024_CVPR,
  author = {Zhao, Shibo and Gao, Yuanjun and Wu, Tianhao and Singh, Damanpreet and Jiang, Rushan and Sun, Haoxiang and Sarawata, Mansi and Qiu, Yuheng and Whittaker, Warren and Higgins, Ian and Du, Yi and Su, Shaoshu and Xu, Can and Keller, John and Karhade, Jay and Nogueira, Lucas and Saha, Sourojit and Zhang, Ji and Wang, Wenshan and Wang, Chen and Scherer, Sebastian},
  title = {SubT-MRS Dataset: Pushing SLAM Towards All-weather Environments},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2024},
  pages = {22647-22657}
}

Acknowledgments

This work was supported by DARPA.

Contact

shiboz@andrew.cmu.edu